How Do Meta Display Glasses Show Messages Hands-Free?

Meta's new display glasses promise to bring messages, translations, and contextual information directly into your line of sight, with no need to pick up your phone. If you're asking "how do Meta display glasses show messages hands-free?", this article breaks down what they do, how they work, practical use cases, limitations, and whether they could replace your phone screen for day-to-day multitasking.

What The Glasses Can Display

At a glance, Meta's display glasses layer lightweight, readable content over the real world. Typical on-screen items demonstrated include:

- Incoming messages: Text previews and sender info that appear in your peripheral vision.

- Translations: Real-time translated subtitles or short translated phrases placed near a speaker or sign.

- Step-by-step instructions: Recipe steps, workout prompts, or assembly guidance that keep your hands free.

- Notifications and contextual cards: Quick actions like replying, calling, or saving content without reaching for your phone.

Why This Feels Different From a Phone

Instead of unlocking a phone and switching apps, the glasses push ephemeral, contextual data directly into a small, glanceable HUD (heads-up display). That reduces friction for micro-tasks: reading a translation while traveling, following a recipe while cooking, or checking a text during a meeting without drawing attention to your hands.

How Do They Work?

Under the hood, the glasses combine micro-displays, sensors, and software to render information. Here are the key components:

- Micro-Displays: A tiny display or projector, typically paired with a waveguide in the lens, directs text and simple graphics into the wearer’s view.

- Companion Processing: A paired device (or built-in module) decodes notifications, runs translation models, and streams minimal UI data.

- Sensors and Eye Tracking: These help position information in the right spot and manage when content appears or hides based on gaze.

- Connectivity: Bluetooth or Wi-Fi links the glasses to your phone or cloud services so messages and translations update in real time.

Together these systems create the illusion of content floating in front of you without heavy, obstructive lenses.
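To make the gaze-driven show/hide idea concrete, here is a minimal sketch in Python. It is not Meta's implementation: the `GazeCardController` class, its method names, and the timing thresholds are all hypothetical, chosen only to illustrate how a card might appear after a short dwell and disappear once you look away.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class GazeCardController:
    """Hypothetical show/hide logic for a HUD card (illustrative values only)."""

    show_after_s: float = 0.3   # assumed dwell time before the card appears
    hide_after_s: float = 1.5   # assumed look-away time before the card hides
    visible: bool = False
    # Timestamp at which the gaze state first disagreed with visibility.
    _since: Optional[float] = field(default=None, init=False, repr=False)

    def update(self, gaze_on_hud: bool, now_s: float) -> bool:
        """Feed one gaze sample; return whether the card should be visible."""
        if gaze_on_hud == self.visible:
            # Gaze agrees with the current state, so cancel any pending change.
            self._since = None
            return self.visible
        if self._since is None:
            self._since = now_s
        threshold = self.show_after_s if gaze_on_hud else self.hide_after_s
        if now_s - self._since >= threshold:
            self.visible = gaze_on_hud
            self._since = None
        return self.visible
```

With these defaults, a glance shorter than 0.3 seconds never reveals the card, and a brief look away does not dismiss it. The two separate thresholds are a design choice that keeps the overlay from flickering when your eyes dart around.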

Practical Setup And Daily Use

Setting up Meta display glasses generally follows these steps:

- Charge the glasses and power them on.

- Install the companion app on your phone and pair the devices.

- Configure notification permissions and translation languages.

- Calibrate the display for comfort and gaze alignment.

Once configured, notifications funnel to the HUD according to rules you set (e.g., only messages from contacts or certain apps). The glasses prioritize short, actionable snippets to avoid overwhelming your vision.
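The rule-based filtering described above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the companion app's actual API: `Notification`, `HudRules`, `to_hud`, and the 60-character limit are hypothetical names and values.

```python
from dataclasses import dataclass, field
from typing import List, Set


@dataclass(frozen=True)
class Notification:
    app: str
    sender: str
    text: str


@dataclass
class HudRules:
    """Hypothetical user rules deciding what reaches the HUD."""

    allowed_apps: Set[str] = field(default_factory=set)
    contacts: Set[str] = field(default_factory=set)
    max_chars: int = 60  # assumed snippet length, not a documented limit

    def allows(self, n: Notification) -> bool:
        # Only allowed apps and known contacts get through.
        return n.app in self.allowed_apps and n.sender in self.contacts

    def snippet(self, n: Notification) -> str:
        # Collapse whitespace, then trim long messages to a glanceable length.
        text = " ".join(n.text.split())
        if len(text) > self.max_chars:
            text = text[: self.max_chars - 3].rstrip() + "..."
        return f"{n.sender}: {text}"


def to_hud(notifications: List[Notification], rules: HudRules) -> List[str]:
    """Return the short snippets that would be shown on the HUD."""
    return [rules.snippet(n) for n in notifications if rules.allows(n)]


rules = HudRules(allowed_apps={"messages"}, contacts={"Ana"}, max_chars=20)
inbox = [
    Notification("messages", "Ana", "Running late,   see you at 7"),
    Notification("games", "Ana", "Daily reward!"),
    Notification("messages", "Unknown", "Hi"),
]
for line in to_hud(inbox, rules):
    print(line)
```

Only the first notification passes both rules; the other two are dropped because the app or the sender is not on the allow list.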

Real Use Cases: When Hands-Free Display Is Truly Helpful

Here are scenarios where seeing messages and translations in your glasses adds real value:

- Cooking: Follow a recipe with each step appearing near your line of sight so you never touch the phone with messy hands.

- Travel: Translate menus or signs instantly without pulling out a translator app.

- Workflows: Receive short alerts or meeting prompts while staying focused on your current task.

- Accessibility: Subtitles and simplified prompts can help people with hearing impairments or attention challenges.

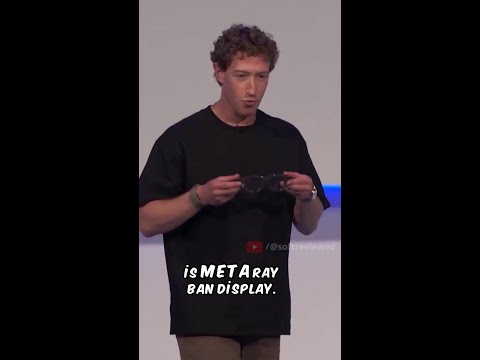

If you want to see a quick hands-on demo, a short clip on YouTube shows messages and translation overlays in action.

Limitations And Privacy Considerations

Despite the promise, there are meaningful constraints to know about:

- Battery Life: Continuous display and wireless connectivity affect runtime. Meta likely balances brightness and update frequency to extend battery life.

- Field of View: HUDs are intentionally modest to avoid obstructing the real world, which limits how much information can be shown at once.

- Privacy: Messages shown on the display could be visible to people nearby at certain viewing angles; always check what appears when you’re in public.

- Dependency on Companion Device: Many features rely on a paired phone or cloud services for heavy lifting like translation models or message syncing.

Tips For Responsible Use

To get the most from display glasses without frustration:

- Limit notifications to essentials; use filters so only important messages appear.

- Adjust brightness and text size for comfort in different lighting.

- Use privacy modes or quick-hide gestures when in sensitive settings.

Should You Replace Your Phone With Glasses?

Not yet. Right now, display glasses are excellent for micro-interactions and situational assistance, not for full-screen browsing, long-form typing, or heavy media consumption. Think of them as a complementary interface that reduces certain interruptions and accelerates hands-free tasks.

See It For Yourself

Words describe the concept, but a short visual demo makes the UX obvious. Watch the demo clip, which shows how messages and translations appear in front of your eyes, to judge readability, timing, and how unobtrusive the overlays feel.

After watching, consider your daily tasks: would quick, glanceable messages and translations improve your workflow or just add another device to manage?

Final Thoughts

Meta’s display glasses are a meaningful step toward ambient computing: blending digital assistance into the real world with minimal friction. They shine for short, contextual interactions like translations and step-by-step guides, but they won’t fully replace smartphones for heavy tasks anytime soon. If your days involve frequent micro-tasks or you value hands-free convenience, they’re worth exploring.
